When AI Collapses Execution

HPP7: How to Overcome the New Constraints on Organizational Speed

This problem is complex and the ideas build in sequence, so I wrote this as an essay. If you’d prefer an overview, the main ideas, or actionable implications, here’s a custom GPT for that.

The first thing you learned leading the People function at a product company was don’t break the machine. The machine was the product development loop: engineers, designers, and PMs converting salaries and compute into features, continuously. Everything else in the organization existed in relation to that loop. HR’s job was to feed it with talent, retain it with culture, and stay well clear of the process itself. Production was expensive, slow, and fragile. Breaking it cost more than anything HR could deliver by touching it.

That constraint shaped how people leaders thought about their role. Strategy meant staying aligned with what product needed. Impact meant keeping it moving. The best HR leaders in high-growth companies weren’t the ones who redesigned the machine. They were the ones who understood it well enough to get its requests fulfilled and mostly get the hell out of the way.

AI has collapsed that logic. Production is now cheap, fast, and increasingly automated across engineering, marketing, GTM, and operations. The constraint has moved into the messy domains people teams have always struggled to influence: how decisions get made, how change gets absorbed, how to revise the governing assumptions that shape how all teams operate. The imperative has inverted. We can no longer leave the machine alone. We have to break it and rebuild.

This piece is about what that rebuilding will take.

I. Execution Is Collapsing

What AI is compressing across functions as production ceases to be the primary constraint.

II. Constraint Migrates Upstream

Why faster individual output doesn’t produce faster organizational outcomes.

III. The Constraint Roadmap

How coordination, observability, and adoption absorb AI gains before they reach organizational output.

IV. When the Frame Becomes the Constraint

How governing assumptions become a ceiling AI cannot raise.

V. The Politics of Frame Revision

Why revising the frame requires absorbing real loss and political cost.

VI. How the Frame Changes

The operational sequence that makes revision politically survivable.

VII. The New Speed

What determines advantage at each stage of constraint collapse.

VIII. What Comes Next

How agentic systems may compress organizational structure, and why revision capacity has to be built now.

I. Execution Is Collapsing

A November 2024 Lenny's survey of 1,750 product managers, designers, and engineers showed AI primarily used to produce artifacts. Half of engineers were using it to write code, a third of founders for decision support, over a fifth of PMs and designers for documentation and research. This was the most AI-forward cohort available. Even here, respondents were saving around four hours per week.

One month later, Andrej Karpathy marked a new threshold:

“Coding agents basically didn’t work before December and basically work since — the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow… You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You’re spinning up AI agents, giving them tasks in English and managing and reviewing their work in parallel.”

Peter Steinberger, creator of OpenClaw, described on The Pragmatic Engineer what this looks like operationally. Agents compile, lint, test, and validate their own work without constant human intervention. Prompts and plans replace pull requests as the durable unit of work. Multiple agents run simultaneously while humans scope tasks, sequence work, and resolve trade-offs. Code production ceases to be the primary constraint in engineering.

Outside engineering the same compression is underway, though unevenly. In execution-heavy, measurement-rich environments the production constraint has disappeared. Outbound prospecting, list enrichment, sequencing, and paid media optimization are now tractable with agents. The prospecting and sequencing layers of BDR work can be scaled at near-zero marginal cost. Performance marketing already operated inside algorithmic systems, and AI closes the remaining loops on creative generation, variant testing, and conversion optimization. Campaign production, audience segmentation, and performance reporting have compressed across marketing.

Enterprise sales has been protected by the layer that agents cannot replicate: relationship capital, credibility, and judgment accumulated over years. But the administrative layer surrounding those cycles is being stripped away. Walmart uses Pactum to run autonomous supplier negotiations across thousands of contracts simultaneously. As buyer incentives steer toward automation, sellers will follow.

In HR, the compression is similar but slightly delayed. AI sourcing tools run continuous candidate identification, enrichment, and outreach sequencing without depending on recruiter throughput. AI screening now handles first-round qualification at scale, conducting, scoring, and scheduling conversations without human involvement until later stages. Onboarding platforms automatically resolve new hire document completion, system access requests, and day-one logistics. Frontline support tools now resolve the vast majority of employee and manager service requests without escalation. Policy and offer letter drafting runs from prompts, when it is not triggered automatically by system stages. The execution layer of the people function is collapsing. The people function’s identity and operating model were built around supporting this execution.

For most people leaders, even in companies that describe themselves as AI-native, the world still looks surprisingly similar to a year ago: a few GenAI tools, a lot of pressure to produce an AI strategy, and a function that still runs on the same throughput model it always has. That window is closing.

II. Constraint Migrates Upstream

By mid-2025, AI was writing around 80% of code at Anthropic, a threshold most engineering organizations are still approaching. This did not make software production frictionless. It increased throughput significantly and at the same time showed where the next constraints would bind further growth.

Mike Krieger, Anthropic’s CPO, described what followed:

“We very rapidly became bottlenecked on things like our merge queue — the line to get your change accepted and deployed. We had to re-architect it because so much more code was being written. I started finding new bottlenecks everywhere. There’s an upstream bottleneck in decision-making and alignment. Then, once building is happening, there are coordination bottlenecks — making sure teams don’t step on each other’s toes. And when work is ready to ship, there’s air-traffic control: landing changes, coordinating releases, figuring out strategy.”

Krieger is describing Goldratt’s central insight from The Goal: system throughput is determined by its slowest constraint, not by the average performance of its parts. Further pressure increases how much the new constraint binds. Anthropic didn’t solve organizational speed by automating code production. It revealed the next set of constraints by removing the one that had been masking them.

Anthropic is the most favorable possible case for this transition: hypergrowth trajectory, highest AI adoption in the industry, ideologically aligned leadership that understands the problem as it unfolds, no legacy systems accumulating drag. If constraint migration is this visible and this disruptive there, it will be slower to recognize and harder to resolve in organizations carrying heavier architectures and denser interdependencies. What Krieger describes is a structural feature of scale.

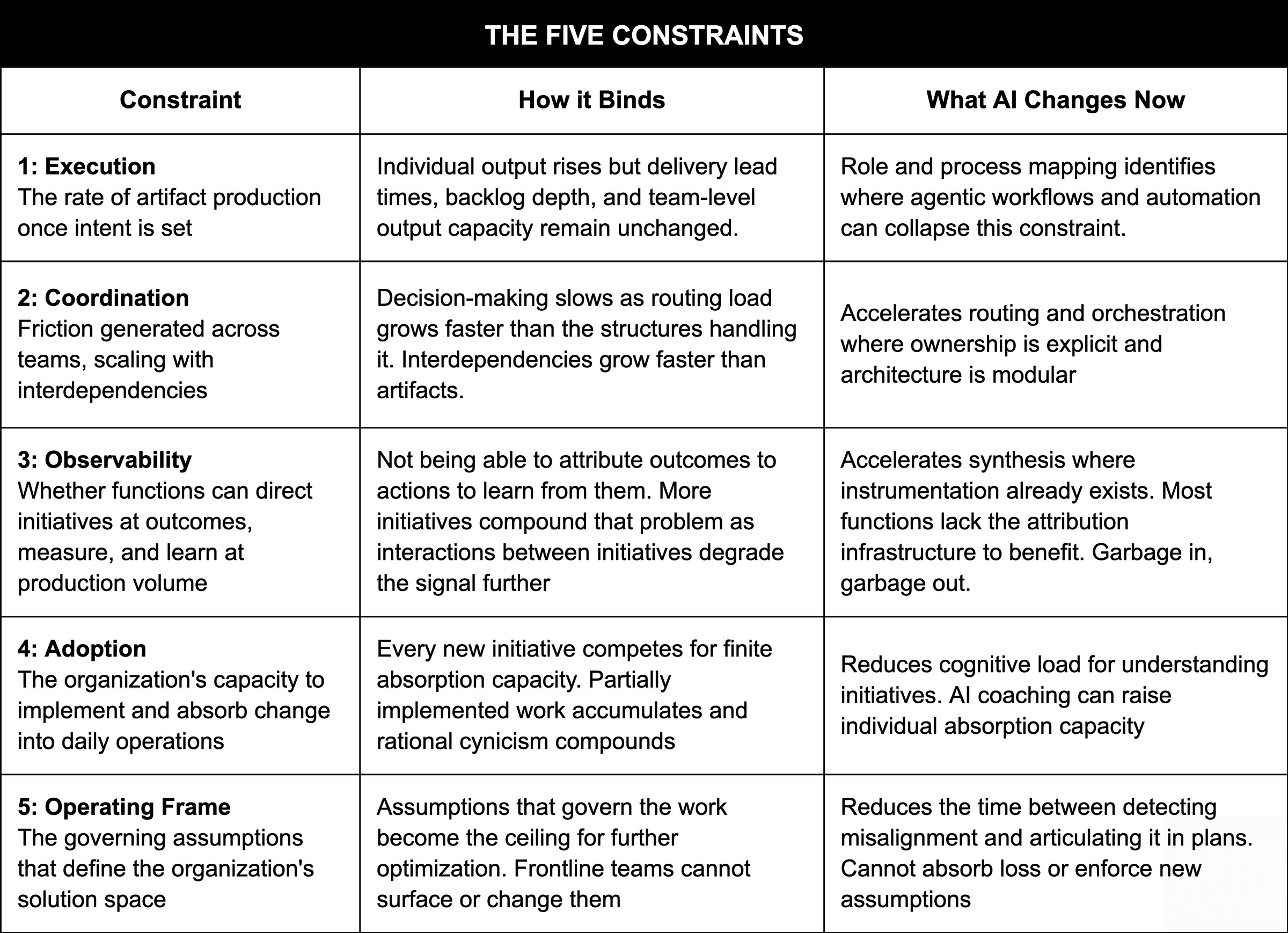

The Hierarchy of Constraints

Krieger’s bottlenecks are not random. They follow a predictable sequence that applies across every scaled organization attempting to absorb execution abundance.

All five constraints are present, though only one is binding at any time. As execution accelerates, pressure moves upstream. Resolving the binding constraint releases more outcome gains until the next constraint in the hierarchy binds.
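The migration described above can be sketched in a few lines. This is a toy model, not a measurement: the five stage names follow the hierarchy in this essay, but the capacity numbers and the multipliers are hypothetical, and real constraints do not reduce to a single number. The only point is that system throughput tracks the lowest-capacity stage, so multiplying execution alone yields a much smaller system-level gain and moves the binding constraint upstream.

```python
def binding_constraint(capacity):
    """Return (stage, throughput) for the stage with the lowest capacity."""
    stage = min(capacity, key=capacity.get)
    return stage, capacity[stage]

# Hypothetical capacities in arbitrary "units of change per week".
capacity = {
    "execution": 10,
    "coordination": 14,
    "observability": 18,
    "adoption": 22,
    "frame": 30,
}

history = [binding_constraint(capacity)]

capacity["execution"] *= 5       # assumed AI gain on execution only
history.append(binding_constraint(capacity))

capacity["coordination"] *= 2    # assumed fix to the new binding constraint
history.append(binding_constraint(capacity))

for stage, throughput in history:
    print(f"binding: {stage:<13} system throughput: {throughput}")
```

In this sketch a 5x execution gain raises system throughput from 10 to 14, because coordination binds next. Doubling coordination capacity then raises it to 18, where observability binds.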

Why Scale Changes Everything

At small scale, the five constraints are tightly coupled to the workflow and team running it. A founding team of ten has no merge queue problem, no coordination overhead worth naming, clear and shared signal on what is working, no adoption lag between decision and behavior, and governing assumptions that can be revised in an afternoon. Individual execution gains compound directly into organizational speed because the other four constraints are resolved quickly around the normal workflow.

Scale breaks this in a specific way. As organizations grow, three things happen simultaneously. Legible systems fragment: early signs that something is off no longer get picked up through shared context before they have to be named and escalated, and by then the insight has to travel through reporting layers that filter much of it out. Insight and authority drift apart: the people closest to the evidence lose proximity to the people with the power to act on it, and surfacing contradictions carries increasing cost as hierarchy steepens. Momentum accumulates: past decisions embed into architecture, hiring profiles, and capital allocation, making reversal a structural problem rather than a conversational one. The constraints were always there. What scale does is sever the connection between where they bind and where the capacity to resolve them sits, turning latent friction into active bottlenecks.

Lenny’s survey split between small and large companies suggests this decoupling is already underway. In smaller firms, execution gains still compound through the system. In larger ones, coordination, review, and approval overhead absorb them before they register as organizational output. The constraint has migrated.

III. The Constraint Roadmap

The next two to five years of organizational transformation will be shaped less by emerging model capabilities than by constraint migration. As execution collapses across functions, most firms are layering AI onto 1x infrastructure. Coordination, observability, and adoption become the binding constraints. AI multiplies artifact volume, but routing structures, attribution systems, and absorption capacity have not kept pace. Working through these constraints in sequence becomes the de facto organizational transformation roadmap.

Coordination

Coordination is the friction generated by interdependencies across teams as work is planned, built, and delivered. It scales with the number of interdependencies, not the volume of work. That distinction is what makes it hard to diagnose correctly.

When execution accelerates and organizational speed doesn’t follow, the instinctive response is more alignment: more meetings, more documentation, more cross-functional syncs. These address the symptom without touching the structure. When production speeds up, the volume of work requiring coordination grows faster than output does. As Rizwan Iqbal, a former colleague and now Director of Engineering at Superhuman, put it:

“Most of the time is spent orienting: understanding the system, aligning with other teams, and figuring out where changes are supposed to live. That’s not a tooling problem. It’s a routing problem.”

For organizations with clean ownership and explicit protocols, AI improves capture, routing, and orchestration. For those without it, AI accelerates documentation of the same underlying confusion. The tool moves faster, but the routing logic remains unchanged.

As agentic systems scale, the problem compounds. Cory Ondrejka, former tech advisor to Sundar Pichai, argues that the challenge of scaling agents mirrors that of scaling people as the number of interactions to be resolved grows faster than artifact production. Organizations become composite systems of humans and agents sharing resources, and coordination protocols that were previously socially inferred must become explicit.

Coordination does not require better alignment rituals. The structural fix is ownership clarity and modular architecture that reduce the number of interdependencies requiring active coordination. Where that structure exists, AI can help at three layers: converging the additional signals generated as work scales, facilitating human decision-making both procedurally and substantively, and resolving some dependencies directly through agent-to-agent coordination.
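One rough way to see why interactions outgrow output, and why modularity helps, is to count potential links. The sketch below assumes the worst case where every actor (human or agent) may need to coordinate with every other, which gives n(n-1)/2 pairwise links. It then compares that with a modular layout where actors coordinate freely inside a module and modules coordinate through one interface each. Both functions and all numbers are illustrative assumptions, not a model of any real organization.

```python
def pairwise_links(n):
    """Potential coordination links if all n actors can depend on each other."""
    return n * (n - 1) // 2

def modular_links(modules, size):
    """Links when actors only coordinate within a module of `size` actors,
    and each pair of modules shares a single interface link."""
    return modules * pairwise_links(size) + pairwise_links(modules)

for n in (5, 10, 20, 40):
    print(f"{n:>2} actors -> {pairwise_links(n):>3} potential links")

flat = pairwise_links(40)
modular = modular_links(modules=4, size=10)
print(f"40 actors, flat: {flat} links; as 4 modules of 10: {modular} links")
```

Doubling the number of actors roughly quadruples the potential links in the flat case, while the modular layout keeps the count near what a few small teams would carry on their own.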

Observability

Observability is the system’s ability to see the consequences of its own actions clearly enough to understand what changed and why. That only matters if the organization can connect what it did to what happened next. Most organizations are full of dashboards and still largely unable to tell which initiatives caused which outcomes.

Charity Majors, CTO of Honeycomb.io, states the organizational implication directly:

“Your ability to get any returns on your investments into AI will be limited by how swiftly you can validate your changes and learn from them.”

The same principle that makes software systems adaptive applies to organizations as well: generate enough signal to understand what the system is doing, what it is not doing, and why.

When observability binds, the organization produces results it cannot learn from. The instinctive response is to add review gates: more approval steps, more governance checkpoints. These slow execution without improving clarity. The problem is not a lack of oversight. It is a lack of evidence linking actions to outcomes.

Observability varies sharply across functions and the gap matters. Product and engineering teams have continuous passive signals from production systems. GTM teams have partial but improving visibility through conversion data and pipeline metrics. People systems remain the least observable domain in most organizations: episodic surveys with multi-week lag, manager narratives that reflect what gets surfaced rather than the full reality, and platforms built for records management rather than diagnosis. Most organizations can observe their software and their customers far better than they can observe themselves.

As initiative volume rises, the interactions between them multiply faster than the work itself. Clarity degrades not because the signal disappears but because the ratio of signal to noise collapses under volume. The fix is to apply the measurement discipline developed in more observable functions to the rest of the organization: shaping how work is planned, scoped, tracked, and assessed so actions can be linked to outcomes. AI can help realize this through new forms of data capture, transcription, pattern detection, and cross-system synthesis that reveal how work is actually unfolding across conversations, workflows, and decisions.

Adoption

When execution becomes cheap, organizations become saturated with partially implemented work. Features are built faster. Campaigns are drafted and launched more quickly. New internal tools and workflows are spun up in days rather than months. Launch becomes easy, while engagement, activation, and implementation remain constrained.

Adoption capacity functions as a form of work-in-progress limit, borrowed from Kanban. Every organization has a finite amount of new behavior, new tooling, and new process it can integrate into real work at once. Execution abundance increases pressure from every direction: leaders push transformation programs, cross-functional teams introduce new workflows, central functions deploy company-wide initiatives, and vendors release new capabilities into tools the organization already uses.

As the limit is consistently exceeded, partial implementation becomes the norm. Employees lose clarity on the organization’s actual priorities. Leaders struggle to reconcile competing initiatives or determine which work matters. Operational overload produces a predictable sequence: overwhelm, then cynicism, then compliance without integration. Each failed round increases the skepticism applied to the next.

Adoption capacity can be raised. AI coaching reduces the cognitive overhead of applying unfamiliar tools and processes under real conditions. LLM-connected knowledge systems help employees build a clearer mental model of the firm, its active initiatives, and how their work connects to them. The draw on capacity can also be reduced. Roadmapping against adoption capacity rather than build capacity makes the WIP limit explicit and forces the prioritization conversation most organizations avoid. Modular initiative design reduces how much capacity each change consumes by breaking programs into independently adoptable components.
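Roadmapping against adoption capacity can be sketched as a simple admission rule. The example below is a minimal illustration under stated assumptions: the `Initiative` type, the `adoption_cost` units, the capacity of 10, and the backlog entries are all hypothetical, and a greedy pass by priority is only one of several reasonable policies.

```python
from dataclasses import dataclass

@dataclass
class Initiative:
    name: str
    priority: int        # lower number = more important
    adoption_cost: int   # hypothetical units of change people must absorb

def admit(initiatives, adoption_capacity):
    """Admit initiatives in priority order until the WIP limit is reached."""
    admitted, deferred, used = [], [], 0
    for item in sorted(initiatives, key=lambda i: i.priority):
        if used + item.adoption_cost <= adoption_capacity:
            admitted.append(item.name)
            used += item.adoption_cost
        else:
            deferred.append(item.name)
    return admitted, deferred

backlog = [
    Initiative("new performance process", priority=1, adoption_cost=5),
    Initiative("AI coaching rollout", priority=2, adoption_cost=4),
    Initiative("expense tool migration", priority=3, adoption_cost=3),
    Initiative("planning template change", priority=4, adoption_cost=1),
]

admitted, deferred = admit(backlog, adoption_capacity=10)
print("admitted:", admitted)
print("deferred:", deferred)
```

Here the expense tool migration is deferred even though it could be built, because the first two initiatives already consume most of the capacity. The smaller planning change still fits, which is the effect modular initiative design aims for: lower-cost components can be adopted where a large program cannot.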

These three constraints define the coming agenda for the people function: enabling coordination at 5x volume, building the observability infrastructure required to learn at production scale, and raising the adoption ceiling so throughput gains actually compound. This is the work inside the engine.

IV. When the Frame Becomes the Constraint

Every organization runs inside an operating frame: the core assumptions defining what it optimizes for, how decisions get made, what gets resourced, which structures are treated as fixed, and what behaviors get rewarded. Execution and the constraints that follow all operate inside this organizational solution space. They cannot change it. As the lower constraints are resolved, optimization within the frame continues until the frame itself becomes the constraint.

AI collapses the cost of optimizing within this frame. Execution accelerates, and every capability gained from the prior three constraints compounds inside the same operating frame. Chris Argyris called this single-loop learning: detecting errors and making improvements in workflow within an existing set of governing variables. He differentiated it from double-loop learning: surfacing, challenging, and replacing the governing variables themselves. AI removes friction from single-loop optimization. It does not initiate double-loop learning. Teams move faster inside the frame while the frame itself remains unchanged.

Competence accumulates around the frame’s assumptions. Success inside a logic reinforces confidence in the logic that produced it. Hiring profiles, incentive structures, and institutional memory encode those assumptions as defaults. The ceiling does not announce itself. It presents as a strategy question, a resource question, a talent question. By the time it is legible as a frame problem, the cost of revision has compounded.

Frame revision is not a calibration. It requires new products, market repositioning, architectural restructuring, operating model redesign, and terminating legacy work. These are not optimization moves. They require revising what the organization is for, how authority flows through it, and what prior bets it is willing to name as wrong. That is a different order of problem from anything the first four constraints demanded.

Meta’s AI pivot shows what changing an established frame can take. Through 2022 and 2023, Meta had publicly staked its AI positioning on open-source development and an academically oriented research culture, with Yann LeCun’s public skepticism about the LLM path to AGI defining its scientific identity. By 2024, closed frontier model development had pulled decisively ahead on capability. Llama 4’s April 2025 launch underwhelmed. By June, Zuckerberg had publicly expressed frustration.

Within a single quarter, Meta replaced governing assumptions of open-source research, academic ethos, and distributed decision-making with concentrated authority, closed development, and commercial deployment speed. It dissolved its existing research lab structure, consolidated operations under Superintelligence Labs, offered compensation packages reaching $100M to recruit researchers from OpenAI, Google, and Anthropic, and acquihired Alexandr Wang from Scale AI in a $14.3 billion deal. In November 2025, LeCun left Meta entirely. A company of Meta’s scale revised the governing logic of its AI division within a single quarter of committing to the shift. Most organizations of this size take that long just to name the problem.

As AI capabilities become broadly accessible, execution advantages built on them decay faster. Features that once required months of engineering effort can now be rebuilt by competitors in weeks. The cost of replication falls alongside the cost of production, so improvements diffuse quickly across competitors operating inside similar frames. Firms converge toward similar outputs not because they choose the same strategy, but because similar optimization logic produces similar ceilings. Optimization inside a saturating frame produces the unusual outcome of products improving even as differentiation narrows.

V. The Politics of Frame Revision

Revising the operating frame redistributes budget, scope, status, and future upside across identifiable people before it produces anything new. The leader whose team gets defunded, the executive whose prior bet gets named as wrong, the function whose authority shrinks as control shifts: these are not casualties of a strategy process. They are the people most invested in the assumptions being revised, and they sit inside every conversation where revision has to happen.

Working within the frame, political cost is manageable. Coordination redesign may create winners and losers, but the losses are bounded and the benefits are clear. Frame revision is different: the losses are structural, and the people being asked to absorb them must accept certain costs against uncertain returns. That asymmetry is what makes it categorically different from every constraint below it.

Each phase of revision carries a distinct political cost of participation. Surfacing frame misalignment before certainty exists requires tolerance for vulnerability. Admitting that existing logic may no longer fit signals loss of control before any alternative is ready. Naming the assumptions tied to identity, authority, and prior bets requires accepting questioning. In organizations that reward decisiveness this reads as weakness rather than rigor. Reallocating capital and authority to enforce revised priorities requires taking visible loss: it produces identifiable winners and losers with named sponsors and makes the political cost concrete and attributable. Embedding new defaults under real operating pressure requires allowing for experimentation, because acting differently under real stakes risks short-term variance in a culture where stability is what gets rewarded. These are the structural costs of revision.

These political costs activate defensive routines across the organization. Chris Argyris observed that under threat, intelligent professionals consistently protect prior reasoning from public examination. Goals are defined unilaterally, conversation is controlled to avoid exposure of error, and structural tension is reframed as execution failure rather than examined at the level of assumptions. It is not incompetence or bad faith but a learned survival strategy in performance-driven systems. Under threat, professionals protect the reasoning that earned them authority, applying the same analytical capability that made them credible in the first place.

These routines are self-sealing. When a strategic contradiction surfaces, it is delegated to execution: resourcing, sequencing, communication, talent. The governing assumptions remain untouched. Single-loop errors are visible, discussable, and correctable. At the level of governing variables, defensive narratives justify themselves by explaining away disconfirming evidence. Governing assumptions become non-negotiable, non-testable, and often invisible, raising the political cost of revision.

Whether a firm can successfully revise its frame depends on its capacity to absorb the political costs of revision. That capacity rests on authority to change priorities and rebalance power, legitimacy to admit that prior assumptions no longer fit, and the ability to enforce revised assumptions through real trade-offs in capital, headcount, and governance. When that capacity falls short, declared change stalls and the prior frame reasserts itself through the normal incentives and routines of the organization.

AI can make strategic contradiction much easier to see. It models opportunity cost, surfaces inconsistencies between stated strategy and actual allocation, and makes misalignment legible. For leaders with authority to act, this reduces the time between detection and articulation. But AI cannot manufacture legitimacy, absorb structural loss, or enforce revised assumptions. It strengthens leaders who can already act and leaves unchanged the political conditions that determine whether they will.

Founder-mode environments are often described as low-politics because they demand strong alignment around governing assumptions. But politics does not disappear. It concentrates vertically around identity and proximity to the founder. The assumptions most resistant to revision are often the founder’s convictions, especially the ones that helped scale the company. In practice, the people with the clearest view of disconfirming evidence are often furthest from permission to surface it, because the political cost is correctly perceived as high.

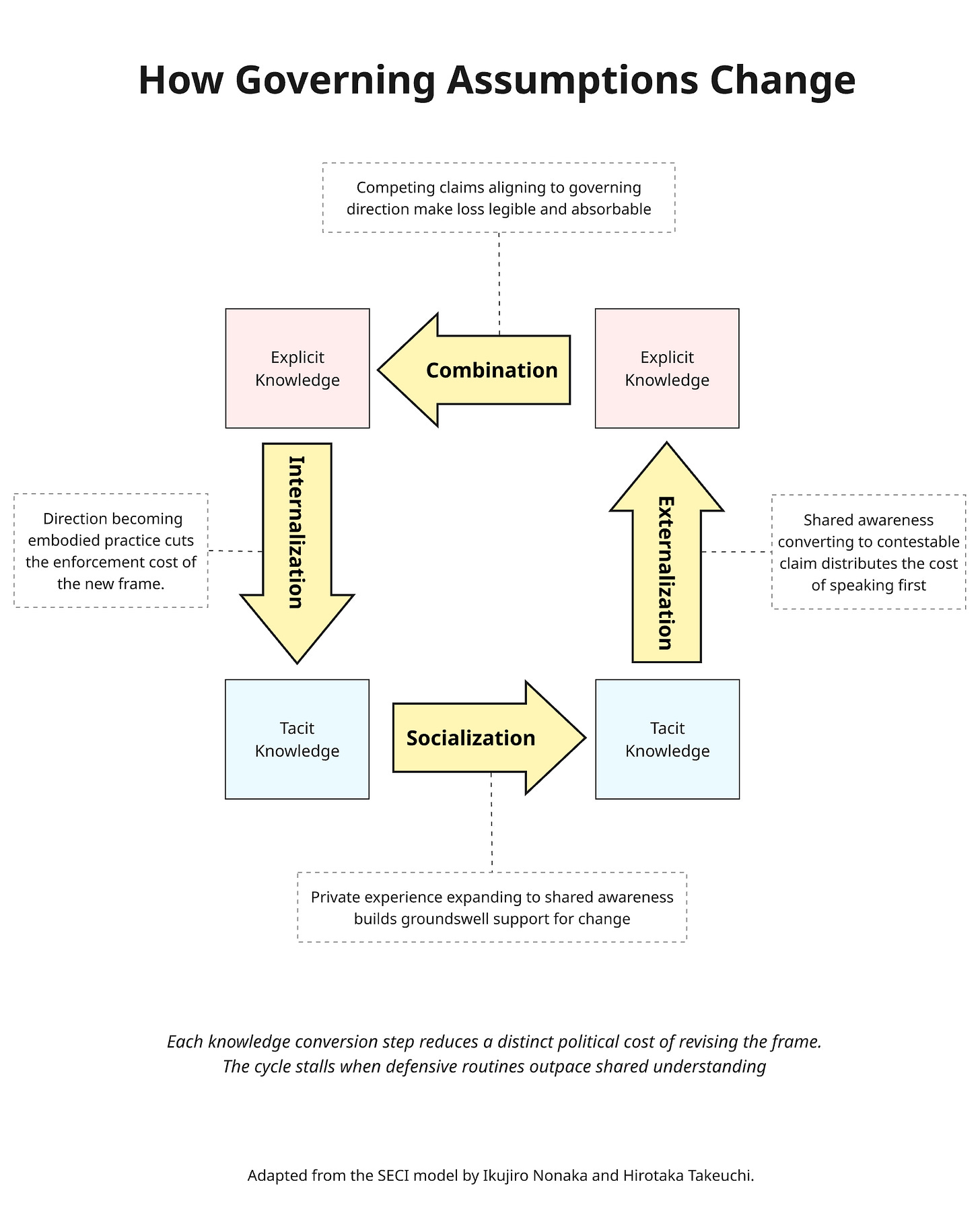

VI. How the Frame Changes

The operating frame is composed of both explicit and tacit knowledge. The explicit forms are visible: stated strategy, resource allocation, org structure, and the metrics that define what counts as success. The tacit forms are not: the judgments that feel like common sense, the practices that encode prior decisions long after anyone remembers choosing them, and the interpretive habits that determine which signals are taken seriously and which are explained away. Without tacit change, we have slideware. Without explicit change, revised assumptions never reach frontline decisions. Revising the frame requires converting between these forms across individuals and systems: surfacing tacit assumptions into something explicit enough to examine and contest, then embedding revised assumptions back into the judgments and practices that shape how work actually happens.

Argyris helped us see why revising the operating frame generates political cost. Nonaka and Takeuchi show how treating frame revision as an organizational learning process can reduce that cost. Their SECI model (Socialization, Externalization, Combination, Internalization) describes the sequence by which experience becomes structural change. Political friction impedes each step of knowledge conversion. Accelerating the flow reduces its effective force, not by eliminating cost but by moving shared understanding faster than defensive routines can organize.

Socialization

Socialization converts private experience into shared situational awareness. When people exposed to similar consequences begin to sense that existing assumptions no longer fit, that recognition spreads through proximity and repeated exposure before it is formally articulated. This broader awareness lowers the political cost for those who later surface misfit before certainty exists. Naming a problem without a solution, before any alternative is ready, is the moment of maximum vulnerability. When recognition is shared rather than private, revision lands on experience people already hold instead of as an abstract challenge to authority. Socialization does not decide anything. It makes articulation survivable.

Socialization is strengthened by widening shared exposure to real work and real consequences: collaborative workflows, recorded sessions, customer calls, behavioral data, and feedback signals that spread tacit understanding without requiring direct participation in every interaction. AI reduces the cost of aggregating and replaying this material at scale, widening access to consequences across the organization. But shared access is not the same as shared experience. Tacit recognition spreads through repeated, socially situated exposure to consequences, not through information distribution alone.

Externalization

Externalization converts shared awareness into explicit, contestable assumptions. What was previously sensed becomes something that can be named, examined, and revised. An organization cannot change what it cannot name. Governing assumptions carry taboo because making them explicit exposes what prior decisions were built on and whose authority those decisions reinforced. Structured discussion distributes that exposure across the conversation instead of concentrating it on the person who speaks first. That is the political cost Externalization reduces: not the cost of being wrong, but the cost of being the one who says it.

This phase is strengthened by institutionalizing forums where competing interpretations can be contrasted, assumptions surfaced, and trade-offs made explicit without immediate penalty. AI can support this phase by surfacing inconsistencies between stated strategy and actual allocation, prompting assumption articulation, and synthesizing divergent interpretations without deference to status or taboo. It cannot remove the political cost of the response once assumptions tied to identity, authority, or prior bets are made explicit.

Combination

Combination converts competing interpretations of revised assumptions into aligned strategic direction. In this phase, frame revision becomes consequential through explicit trade-offs across roadmaps, resource allocation, and governance. The shared awareness and conceptual clarity of earlier phases provide the rationale required for these difficult choices. Programs are terminated. Resources move. Decision rights shift. When trade-offs are explicit and integrated rather than implicit and fragmented, loss is legible. This is more absorbable than loss that feels arbitrary.

Direction stalls when plans are revised but budgets, incentives, and governance remain untouched long enough for the existing allocation of capital and authority to continue governing by default. AI compresses this phase by rapidly generating and stress-testing coherent, cross-domain plans, modeling opportunity costs, and surfacing trade-offs that would otherwise remain politically unsayable. It lowers the friction of producing integrated direction. It cannot absorb the political cost of the trade-offs that direction requires.

Internalization

Internalization converts articulated direction into organizational capability. Explicit principles, decision rules, and strategic shifts become patterns of action under real operating pressure. It completes the frame revision when local actors make trade-offs consistent with the revised frame without consulting the document, because the logic has become reflex. This phase reduces the political cost of enforcing the new frame. Distributed experimentation seeds examples of the revised assumptions applied under real conditions, building the social proof that pulls adoption rather than requiring it to be mandated. The more internalization spreads through example, the less authority has to be spent enforcing compliance.

AI accelerates Internalization at the level of individual practice. AI coaching embedded in decision-making and reflection reduces the cognitive overhead of applying an unfamiliar frame under pressure. Surfacing examples of the revised logic applied well creates social proof across distributed teams without central curation. Behavioral drift detection gives leaders earlier signal when local decisions revert to prior frame logic, reducing enforcement cost before that drift hardens into a norm.

The end of one cycle seeds the next. Internalization completes as the revised frame becomes reflex, and that reflex immediately generates new tacit experience. Revised assumptions surface new constraints, create new misalignments, and expose trade-offs that were previously invisible. That experience becomes raw material that Socialization converts in the next round. This frame revision cycle once took years in scaled firms. Execution-abundant markets are compressing it to months. The organizations that thrive are not those that get revision right once, but those that build the capacity to keep revising.

Enabling Conditions for Revision

The revision cycle runs on accurate perception. For signals to challenge the frame rather than be absorbed by it, two conditions have to be in place. Without them, Socialization spreads noise, Externalization devolves into opinion, Combination resolves around power, and Internalization reinforces the prior frame.

The first is falsifiable signal: evidence designed to detect when governing assumptions are wrong, not merely when execution is underperforming. This is a higher bar than the operational observability described earlier, which indicates whether initiatives are producing results. A falsifiable signal indicates whether the assumptions underlying those initiatives remain valid. An organization can have excellent dashboards and zero falsifiable signal if everything it measures is optimized inside the frame. Nothing it tracks can indicate that the frame itself is misaligned. The strongest incentives are to avoid generating the one piece of evidence that could falsify the current strategy. The survey that would test the core assumption doesn’t get sent. The experiment that could disprove the thesis doesn’t get funded.

The second is interpretive talent: people capable of reading misfit signals at the level of governing assumptions, rather than translating them immediately into an execution fix. That requires domain fluency to distinguish meaningful deviation from noise, systems thinking to reason about feedback loops and second-order effects, an improvement orientation that treats outcomes as provisional rather than as verdicts, and metacognition to notice one’s own framing under pressure before defending it. Select for people who revise their own governing assumptions in the same way the organization must revise its own.

VII. The New Speed

Execution speed is the most visible benefit of AI. It produces measurable output, aligns with how individuals experience the technology, and generates immediate evidence of progress. That visibility makes it easy to measure and easy to value. It does not make it decisive.

Every organization in this audience is already moving faster than it was two years ago. Execution is accelerating across engineering, GTM, marketing, and operations. The gains are real, but they are increasingly mistaken for durable advantage.

As AI capabilities spread across competitors, execution speed no longer sustains differentiation. The cost of replicating what competitors build is falling alongside the cost of building it. Advantages that once lasted multiple planning cycles now compress toward parity within one. Throughput is now the entry condition for competing, not the variable that determines competitive distance.

Each organization needs to identify which speed addresses its binding constraint. Many companies are still barely using AI to improve how fast work gets done. For them, execution speed is binding, and improving it determines whether the organization stays default alive in a market where baseline progress is no longer optional. For those that have optimized execution but are not seeing outcomes follow, constraint resolution speed is binding: how fast coordination, observability, and adoption convert execution gains into sustained quality leadership until competitors similarly optimize. For those where outcomes are compounding but differentiation is narrowing, frame revision speed is binding: how fast governing assumptions change in response to disconfirming evidence, and whether those outcomes sustain differentiation or compress toward commoditization.

High throughput feels like organizational health. Smooth constraint resolution feels like winning. Both can be true while the frame that generates them races toward commoditization. The metric that signals success at one speed obscures the binding constraint at the next. It's not just a question of how fast, but of which speed matters. What follows is a diagnostic for each speed: internal signals that reveal whether a constraint is resolving, and external signals that reveal whether resolving it is still where competitive distance is being created.

Gear 1: Execution Speed

When an organization is producing below the baseline the market now requires, execution speed is the highest-leverage focus. Individual contributors are using AI to increase task throughput without that gain transforming team-level output. Work is produced faster and artifacts multiply, yet delivery lead times, backlog depth, and team-level output capacity remain unchanged.

Two measures track execution speed improvement. Cycle Time measures the interval from intent specified to artifact produced at quality standard: code shipped, campaign launched, candidate assessed, contract drafted. Process Completion Time measures the recurring processes the organization depends on: hiring loops, onboarding, campaign production, deal close. Both should compress as AI deployment reaches the team level. If they have not, individual productivity gains have not translated into team throughput. Execution remains the priority.
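To make "both should compress" concrete, here is a minimal sketch of one way to check it. It assumes you can export per-item cycle times (in days) for a period before and a period after team-level AI deployment. The function name, the use of the median, and the sample numbers are illustrative assumptions, not a prescribed method.

```python
from statistics import median

def compression(before_days, after_days):
    """Fractional reduction in median cycle time between two periods.

    0.25 means the median interval shrank by 25%; 0 or below means
    no compression at the team level. (Hypothetical helper.)
    """
    return 1 - median(after_days) / median(before_days)

# Hypothetical cycle times, intent specified -> artifact at quality standard
before = [12, 15, 9, 14, 10]   # median 12 days
after = [8, 9, 7, 11, 9]       # median 9 days

cycle_time_compression = compression(before, after)
print(f"Median cycle time compression: {cycle_time_compression:.0%}")
```

The same comparison can be run on Process Completion Time for hiring loops, onboarding, or deal close. The median is used here only because a few stalled items would otherwise dominate an average.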

The external signal is how delivery compares with market expectations. Is the organization shipping the features, practices, and pricing models that have become the market baseline? If not, execution speed remains the priority. If so, the constraint may have migrated.

Gear 2: Constraint Resolution Speed

When AI has transformed team throughput but organizational outcomes are not following, constraint resolution speed unlocks the next wave of competitive gains. The coordination, observability, and adoption constraints covered earlier in this piece are what bind at this stage. Execution gains are being absorbed before they reach organizational output.

Absorption Latency measures the time from when a change is directed to when new behavior appears consistently in operational data. This might be the interval between a new architecture decision and seeing it reflected in code structure, between a revised ICP and seeing it reflected in which deals get pursued, or between a new performance standard and seeing it reflected in promotion decisions. Tracking adoption without a quality standard mistakes coordination failure for progress. Most organizations cannot measure the start of absorption directly, because the moment a change is directed is not well recorded. In practice, they use deployment date as a proxy. The difference is that absorption latency begins when the organization commits to the change, while the proxy begins only once the change is launched. If the measured interval is not shrinking as execution volume rises, constraint resolution speed remains the priority.
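The gap between absorption latency and its deployment-date proxy is simple date arithmetic. The sketch below uses hypothetical dates and function names to show how much lag the proxy hides when the commitment date is not recorded.

```python
from datetime import date

def latency_days(start: date, behavior_consistent: date) -> int:
    """Days from a start marker to consistent new behavior in operational data."""
    return (behavior_consistent - start).days

# Hypothetical timeline for a single change (all dates are illustrative)
directed = date(2025, 1, 6)      # organization commits to the change
deployed = date(2025, 2, 3)      # change is launched (the usual proxy start)
consistent = date(2025, 4, 14)   # new behavior appears consistently

absorption_latency = latency_days(directed, consistent)
proxy_latency = latency_days(deployed, consistent)
hidden_lag = absorption_latency - proxy_latency

print(absorption_latency, proxy_latency, hidden_lag)
```

In this example the proxy understates the interval by four weeks. Recording the date a change is directed, even informally, is what makes the true figure measurable.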

The external signal is replication speed. Can you copy what competitors are building as fast as they can copy you? If competitors replicate your capabilities faster than you can match theirs, or you are consistently behind on features and practices market leaders have already validated, constraint resolution speed remains the priority. If replication is roughly symmetric, or you remain the sustained quality leader in the market, the constraint may have migrated to the frame itself.

Gear 3: Frame Revision Speed

When the organization’s optimized operations are producing market leadership but differentiation is narrowing, frame revision speed is what sustains competitive advantage. The governing assumptions directing that execution are producing a ceiling competitors across the market will reach at roughly the same time. The frame is the constraint, not the performance.

Revision Latency is the primary measure: the interval between the first observable structural contradiction and a measurable shift in capital, headcount, or architecture. The first timestamp is when disconfirming evidence becomes observable. The second is when allocation actually moved. AI analysis of collaboration data, planning artifacts, and allocation records can surface both. Without it, the first timestamp is reconstructed in hindsight, and the lag shrinks on paper rather than in reality.

Strategic Kill Rate and Reallocation Magnitude are the enforcement signals that confirm whether revision is structural or just declared. Kill Rate measures how frequently coherent work gets terminated because it no longer fits the governing frame. A low rate may indicate the organization cannot absorb the political cost of stopping work, leaving capital distributed across initiatives that no longer serve the governing logic while new priorities go underfunded. Reallocation Magnitude confirms the shift: the percentage of budget and talent density moved toward new governing variables within a planning cycle. Without it, revision latency is symbolic and the prior logic governs by default regardless of what the strategy document says. Who took the loss this quarter that proved the new direction was real? Most leadership teams cannot produce a name. When they cannot, the frame remains intact.
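As a rough illustration, the two enforcement signals can be computed from a planning-cycle portfolio. This sketch makes several assumptions the essay does not specify: Kill Rate is treated as the share of initiatives terminated for frame misfit within one cycle, and Reallocation Magnitude as the share of total budget moved toward the new priorities (talent density is left out for simplicity). The data structure and numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Initiative:
    name: str
    budget: float                  # budget at the start of the cycle
    killed_for_frame_misfit: bool  # terminated because it no longer fits the frame
    moved_to_new_frame: float      # budget actually reallocated to new priorities

def kill_rate(portfolio):
    """Share of initiatives terminated for frame misfit (assumed definition)."""
    return sum(i.killed_for_frame_misfit for i in portfolio) / len(portfolio)

def reallocation_magnitude(portfolio):
    """Share of total budget moved toward new governing variables (assumed definition)."""
    total = sum(i.budget for i in portfolio)
    return sum(i.moved_to_new_frame for i in portfolio) / total

# Hypothetical portfolio for one planning cycle
portfolio = [
    Initiative("legacy integrations", 200, True, 200),
    Initiative("seat-based upsell", 300, False, 100),
    Initiative("core platform", 400, False, 0),
    Initiative("agentic pilot", 100, False, 0),
]

rate = kill_rate(portfolio)
magnitude = reallocation_magnitude(portfolio)
print(f"Kill rate: {rate:.0%}, reallocation magnitude: {magnitude:.0%}")
```

Neither number means much in isolation. Read together across cycles, they show whether declared revisions are followed by terminated work and moved budget.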

The external signal is a market trending toward commoditization. Competitors are trending toward outcomes of similar quality at similar cost, pricing power is eroding despite strong delivery, and category boundaries are shifting as competitors reposition or redefine what the market competes on. Successful frame revision opens new terrain to optimize against. But the firms that sustain advantage are not the ones that revise once. They revise faster than the market can converge on what they’ve built.

VIII. What Comes Next

This piece is written for the current interval, between AI-enabled work collapsing execution and agentic systems replacing the organizational structure itself. How long that interval remains open is uncertain. It may compress into two years or stretch to five.

HPP8: ‘The End of the Organization’ will speculate on what transitioning to agent-centered organizational structures means for competition, employees, and the people function. Frontier models arriving in the next 18 months would make agentic systems robust enough to deploy across most knowledge work functions. At their production volumes, the constraints this piece describes would bind simultaneously rather than sequentially. Agentic systems would also begin absorbing friction in coordination, observability, and adoption, compressing the time needed to work through the constraint hierarchy into months. As execution and constraint-resolution systems integrate, they can produce an operational flywheel with a speed and responsiveness that make the old organizational structure nonviable. That transition would fire a starting gun for a new stage of competition.

What this piece establishes is the prerequisite for this transition. The firms that work through the constraint hierarchy sequentially arrive at that starting gun with revision machinery already operational. The firms that don’t will face the stacked version: frame revision at maximum scale, on maximum assumptions, under competitive pressure, without the capacity the sequential path would have built. For them, the race is lost before the starting gun fires.

For SaaS founders

You are already in frame revision, but you probably haven’t named it that way. The coordination and adoption work you are doing is important, but it operates inside a frame that the market is making obsolete. The market is telling you seat-based pricing no longer reflects the value you deliver, but repricing risks the revenue engine. The market wants AI automation but your management structures were built for human decision chains, and the people who built those structures are still in the room. Your product roadmap includes agentic features but your enterprise security posture was designed to be differentiating. None of these are product problems or go-to-market problems. They are changes to the governing assumptions that define your solution space, running without the revision infrastructure this piece describes.

The question is how you build the capacity to take on larger frame revisions before the market forces them on you.

For AI-native founders

The frame you are building now is encoding itself into every hire, partnership, and architectural decision. Execution is your advantage and it has been compounding. You are likely working through coordination and observability limits, but the frame those constraints operate within is hardening faster than you can see it. The concentrated authority and conviction that make disruption possible make self-revision hard. You built for differentiation, assuming you would remain the differentiator. Your pricing model, talent structure, and market position all express that assumption. How long before a new team, less burdened by your accumulated technical and organizational debt, takes the next model and builds 70% of what you have created?

Founder control can absorb one revision. It does not create the distributed capacity to sustain repeated revision under pressure. The infrastructure required is organizational and political, not positional. The question is whether you are building it now, or whether each successive revision will cost more than the last because the organization was never designed to bear it.

Architect for the Organization

In 2014, I joined RelateIQ for my first Head of People role. Steve Loughlin, then CEO, told me I needed to be Product Manager for the Organization. I nodded in knowing agreement, understanding nothing. It took six years to work through what that actually meant: building a roadmap executives actually owned, scoping problems before designing solutions, and launching with leaders rather than at them. Getting the machine to run well. That was the right job for the constraint it addressed. The constraint has moved.

Previously, we assumed the operating frame was correct and needed to be operationalized and protected. Now we must assume the frame will require revision. The work becomes architectural: designing the conditions under which governing assumptions can change while execution continues to accelerate across every function the people team supports. The classical mandate to Hire, Motivate, and Retain is superseded by a new one: Accelerate, Release, and Revise.

The agentic transition concentrates its hardest problems in organizational development. Those problems are not adjacent to OD mastery but identical to it. The people executive must guide this transition not because the role confers authority, but because the work requires expertise in navigating the political dynamics that determine whether revision is survivable, building the organizational learning processes that make it possible, and driving the behavior change that makes it real. That expertise has to be built, not assumed, and the learning curve allows months, not years.